Voice technology is changing the way we interact with our devices.

Siri points out your next turn in an unfamiliar town. Google Assistant scours the internet for directions on grilling salmon, and reads them to you while you work. The AI voicebot at the other end of the customer service line gets you results, without waiting or push-button menus. Call it the age of conversational computing—and the computer’s end of these conversations comes courtesy of a digital technology called text to speech, or TTS for short.

But TTS isn’t just for fancy new voice computing applications. For years it’s been used as an accessibility tool; as educational technology (edtech); and as an audio alternative to reading. Over half of U.S. adults have listened to audiobooks, and TTS helped make those experiences possible. All these examples just scratch the surface of what TTS can do, but the details of the technology remain unfamiliar to many.

In this article, we’ll answer the question, “What is TTS?” Then we’ll discuss how individuals and businesses use synthetic voice tools, before concluding with a brief history of the technology.

Table of Contents

Curious what today’s leading TTS actually sounds like?

Explore ReadSpeaker’s TTS voices

Introducing Text to Speech: The Meaning and Science of TTS

Your definitive introduction to TTS technology starts with a fundamental question:

What is TTS? In other words, what does TTS mean?

Text-to-speech technology is software that takes text as an input and produces audible speech as an output. In other words, it goes from text to speech, making TTS one of the more aptly named technologies of the digital revolution. The software that turns text into speech goes by many names: text-to-speech converter, TTS engine, TTS tool. They all mean the same.

Regardless of what you call it, a full TTS system needs at least two components: the software that predicts the best possible pronunciation of any given text, and a program that produces voice sound waves; that’s called a vocoder.

Text to speech is a multidisciplinary field, requiring detailed knowledge in a variety of sciences. If you wanted to build a TTS system from scratch, you’d have to study the following subjects:

- Linguistics, the scientific study of language. In order to synthesize coherent speech, TTS systems need a way to recognize how written language is pronounced by a human speaker. That requires knowledge of linguistics, down to the level of the phoneme—the units of sound that, combined, make up speech, such as the /c/ sound in cat. To achieve truly lifelike TTS, the system also needs to predict appropriate prosody—that includes elements of speech beyond the phoneme, such as stresses, pauses, and intonation.

- Audio signal processing, the creation and manipulation of digital representations of sound. Audio (speech) signals are electronic representations of sound waves. The speech signal is represented digitally as a sequence of numbers. In the context of TTS, speech scientists use different feature representations that describe discrete aspects of the speech signal, making it possible to train AI models to generate new speech.

- Artificial intelligence, especially deep learning, a type of machine learning that uses a computing architecture called a deep neural network (DNN). A neural network is a computational model inspired by the human brain. It’s made up of complex webs of processors, each of which performs a processing task before sending its output to another processor. A trained DNN learns the best processing pathway to achieve accurate results. This model packs a lot of computing power, making it ideal for handling the huge number of variables required for high-quality speech synthesis.

The speech scientists at ReadSpeaker conduct research and practice in all these fields, continually pushing TTS technology forward. The TTS development team produces lifelike TTS voices for brands, organizations, and application developers, allowing companies to set themselves apart across the Internet of Voice, whether that’s embedded on a smartphone, through smart speakers, or on a voice-enabled mobile app. In fact, TTS voices are emerging in an ever-expanding range of devices, and for a growing number of uses (and users).

Who Uses TTS? Understanding TTS Use Cases

People with visual and reading impairments were the early adopters of TTS. It makes sense: TTS eases the internet experience for the 1 out of 5 people who have dyslexia. It also helps low literacy readers and people with learning disabilities by removing the stress of reading and presenting information in an optimal format. We’re progressing toward a more accessible internet of the future, and TTS is an essential part of that movement.

Already, many forward-minded content owners and publishers offer TTS solutions to make the web a place for all. Businesses and buildings are required to provide entryways for wheelchair users and those with limited mobility. Shouldn’t the internet be accessible for everyone, too? Yet, as technology evolves, so have the uses and the users of TTS.

Here are just a few of the populations benefitting from TTS technology already:

1. Students

Every student learns differently. An individual may prefer lessons presented visually, aurally, or through hands-on experience. Many learners retain information best when they see and hear it at once. This is called bimodal learning.

A popular education framework called Universal Design for Learning (UDL) recommends bimodal learning to help every student be successful. Teachers of all grade levels who promote UDL use a combination of auditory, visual, and kinesthetic techniques with the help of technology and adaptable lesson plans.

Even if you identify as a kinesthetic or visual learner, science says adding an auditory method may help you retain information. And if nothing else, TTS makes proofreading a lot more manageable.

2. Readers on the Go

When you want to catch up on the news, podcasts and audiobooks only take you so far. So, if there’s an in-depth profile in The New Yorker or a longform article from The Guardian that you want to read, TTS can read it to you. That frees you up to drive, exercise, or clean at the same time. Or you may just prefer listening over reading.

3. Multitaskers

The shortcuts TTS can provide are endless—from reading recipes while you cook to dictating instruction manuals when assembling furniture. The only limit to how much it can help is your own imagination.

4. Mature Readers

Understandably, older adults may want to avoid straining their eyes to read the tiny text on a smartphone. Text to speech can alleviate this issue, making online content easy to consume regardless of your skill with technology or the state of your vision.

5. Younger Generations

Offer technology to young people, and they’re likely to use it—whether it’s strictly “necessary” for them or not. In 2022, 70% of 18 to -25-year-old consumers turned on subtitles while viewing video content “most of the time,” not because they had hearing impairments, but because it was convenient. And so many Tik Tok users took advantage of the app’s TTS feature that rival Instagram rolled out their TTS in 2021.

Meanwhile, a survey of college undergraduates found that only 5% of respondents had a disability necessitating the use of assistive technology—but at least 18% of the students considered each technology “necessary.” The point is, Generation Z uses TTS not just as an accessibility tool, but as a preference.

6. Readers With Visual Impairments or Light Sensitivity

Older adults aren’t the only ones who want to avoid straining their eyes on screens. Many people have mild visual impairments or suffer from sensitivity to light. Think of people with chronic migraines, for instance. Thanks to TTS, these users can be more productive on days when staring at screens seems like a pain too much to bear.

In fact, medical studies show that exposure to light at night, particularly blue light from screens, has adverse health effects. It not only disrupts our biological clocks, but it may increase the risk of cancer, diabetes, heart disease, and obesity rates. Text to speech offers users a safer way to consume written content, without staring at a screen.

7. Foreign Language Students

Studies show that listening to a different language aids students in learning the new dialect. Text to speech can help with that. ReadSpeaker is an international TTS software company, featuring over 50 languages and more than 150 voices, all based on native speakers.

With ReadSpeaker, foreign language students can get a feel for pronunciation, cadence, and accents. One feature that’s especially helpful in this regard is the ability to have words highlighted as they’re read aloud, which can help students feel confident in their pronunciation of new vocabulary.

8. Multilingual Readers

New generations raised in multilingual households may understand their parent’s language, but they may not feel fluent enough to read, write, or speak it. This is common in many communities, where the home language is not studied in school. For second and third generations who want to maintain or strengthen their bonds to their mother lands, ReadSpeaker can make articles, newspapers, and other literature accessible and understandable through speech.

9. People With Severe Speech Impairments

A speech-generating device (SGD), also known as a voice output communication aid (VOCA), is useful for those who have severe speech impairments and who would otherwise not be able to communicate verbally. Grouped under the term “augmentative and alternative communication (AAC),” SGDs and VOCAs can now be integrated into mobile devices such as smartphones.

Stephen Hawking, who suffered from ALS, and also renowned film critic Roger Ebert were among the most well-known users of SGDs using TTS technology.

So, who uses TTS? Many people, for many different reasons. And if you’re looking for a way to solve today’s business challenges, TTS may be the technology you need.

To learn more about ReadSpeaker’s TTS services, check out our FAQ.

Exploring TTS Use Cases

When ReadSpeaker first began synthesizing speech in 1999, TTS was primarily used as an accessibility tool. Text to speech makes written content across platforms available to people with visual impairments, low literacy, cognitive disabilities, and other barriers to access. And while accessibility remains a core value of ReadSpeaker’s solutions, the rise of voice computing has led to an ever-growing range of applications for TTS across devices, especially in business.

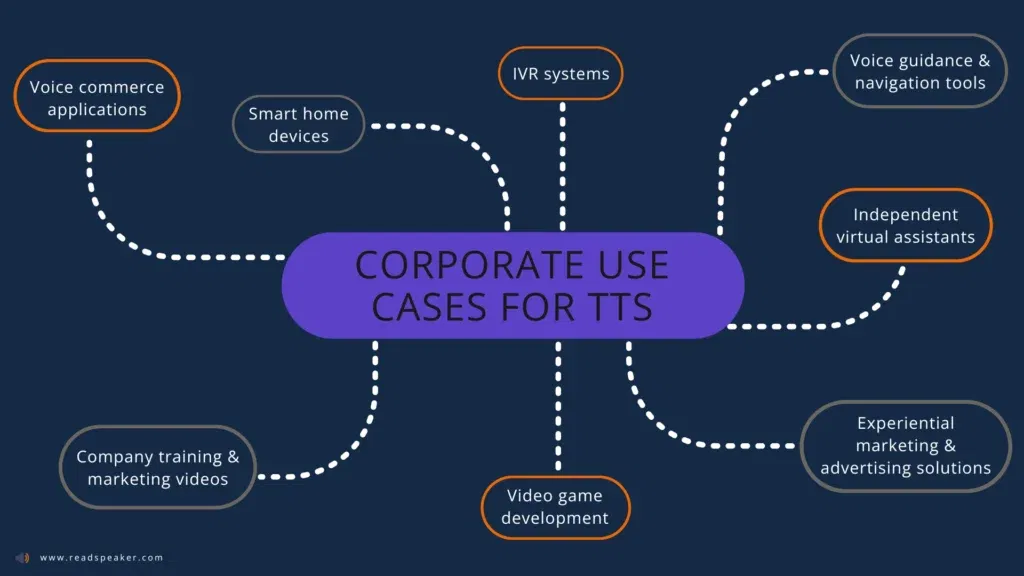

Here are just a few of the powerful corporate use cases for TTS in today’s voice-first world:

- Conversational interactive voice response (IVR) systems, as in customer service call centers

- Voice commerce applications, such as shopping on an Amazon Alexa device

- Voice guidance and navigation tools, like GPS mapping apps

- Smart home devices and other voice-enabled Internet of Things (IoT) tools

- Independent virtual assistants like Apple’s Siri, but for your own brand

- Experiential marketing and advertising solutions, like interactive voice ads on music streaming services or branded smart speaker apps

- Video game development, with dynamic runtime TTS for accessibility features, scene prototyping, and AI non-player characters

- Training and marketing videos that allow creators to change voice-overs without tracking down original voice talent for ongoing recording sessions

- Public transportation systems, including passenger information systems, self-service kiosks, and customer service voicebots

- Fintech, such as virtual banking assistants

- Media production, from podcasts to audiobooks to voiceovers

It’s easy to make a business case for TTS in any of these areas. Text to speech can unlock powerful customer experiences, which translate into rapid returns on your technology investment.

Of course, there are plenty of TTS converters. Why pay for something you can get for free? It turns out there are lots of reasons. Let’s explore just a few.

Free TTS Converters: Why You Should Avoid Them Altogether

If you’re using TTS to meet business goals, a free text-to-speech converter probably isn’t the best choice. That’s true for any of the use cases we discussed in the previous section, but it’s equally true for any commercial project.

How do you know if your project counts as “commercial?”

Just ask one question: Does the offering make you money? If the answer is yes, it’s commercial, and requires a similarly commercial approach to synthesizing speech. That could be adding TTS to a voice-controlled device, building a voicebot, or even adding voice overs to YouTube videos, if they’re ad-supported.

Here’s why commercial users can’t rely on free TTS—and why they should depend on a proven TTS provider like ReadSpeaker, which has been at the forefront of the industry for more than two decades.

1. Professional TTS protects against legal problems.

Most free TTS converters don’t grant commercial licenses. Even if they do, you can’t be sure they have permission from everyone in the voice’s custody chain—a line of stakeholders that stretches all the way back to the recording booth. You see, TTS voices aren’t just lines of code; they all start with a voice actor, whose speech recordings are used to create the synthetic TTS voice that reads your text aloud.

Voice actors have contracts that give them certain rights over how their voices are used, and those rights can create serious liabilities for TTS users, even years down the line. For a high-profile example, just look at TikTok. When the social media app rolled out its TTS feature, it quickly went viral—until Bev Standing, the voice actor from whom the TTS voice was sourced, sued for unlicensed use of her voice. TikTok settled with Standing for an undisclosed amount in 2021.

ReadSpeaker grants commercial licenses and creates all its voices in-house. That allows us to control permissions from the voice actor through to the end user, with documentation at every step of the way.

2. Paid TTS companies provide higher-quality audio.

Professional TTS voices sound better. At ReadSpeaker, we create advanced TTS voices with proprietary deep neural networks, and our team of speech scientists focus on the pronunciation accuracy of every voice before rollout. The result is natural, lifelike machine speech that leads to a measurably better user experience.

But the quality of the TTS voice itself isn’t the only consideration in great-sounding synthetic speech. There’s also the quality of the sound signal itself. ReadSpeaker’s TTS engines allow users to control bit rate and frequency, balancing sound fidelity with bandwidth requirements for each specific use case. That’s an option that’s rarely available from free online TTS converters.

3. Free TTS services are more prone to mispronunciation.

Language is full of tricky pronunciation problems, particularly for computers. How can a TTS engine differentiate between “lead,” as in to lead the way, and “lead,” as in a lead pipe? ReadSpeaker’s TTS engines examine the context of the word, looking at the surrounding language and testing it against a database of common usages. That allows the software to choose the accurate pronunciation in most circumstances. Free TTS converters are unlikely to include an advanced feature like this.

Then there are proper names, industry jargon, new acronyms, and more. ReadSpeaker’s speech scientists continually update a central pronunciation dictionary to keep our products’ speech accurate. Users themselves can create their own TTS pronunciation dictionaries, spelling correct pronunciation phonetically in a simple process with a complicated name: orthographic transcription. That allows commercial users to avoid embarrassments like voicebots that mispronounce their own company names, key industry terms, and more.

Commercial TTS With Custom AI Voices From ReadSpeaker

If you’re concerned about any of these issues, a free TTS converter probably isn’t for you. But there’s another reason companies should choose an established TTS leader like ReadSpeaker: to preserve your brand in voice channels.

A custom TTS voice creates a consistent customer experience across channels, strengthening relationships over time.

A unique TTS voice also differentiates your brand from other companies. Lots of brands use Amazon’s Alexa voice on their smart speaker apps; that makes users think of Amazon, not the brand. Free TTS voices—and most paid ones—may speak for any number of users, including, perhaps, your competitors. An original branded TTS voice sets you apart. Think of it as a sonic logo.

Want to learn more about custom TTS voices and the benefits of this advanced technology? Contact ReadSpeaker today.

So far, we’ve covered a general explanation of TTS, introduced a few common TTS users, and discussed the technology’s implications for business. But no introduction to voice technology is complete without a quick history lesson—or a rundown of the major categories of synthetic speech.

TTS Then and Now

Mechanical attempts at synthetic speech date back to the 18th century. Electrical synthetic speech has been around since Homer Dudley’s Voder of the 1930s. But the first system to go straight from text to speech in the English language arrived in 1968, and was designed by Noriko Umeda and a team from Japan’s Electrotechnical Laboratory.

Since then, researchers have come up with a cascade of new TTS technologies, each of which operates in its own distinct way. Here’s a brief overview of the dominant forms of TTS, past and present, from the earliest experiments to the latest AI capabilities.

Formant Synthesis and Articulatory Synthesis

Early TTS systems used rule-based technologies such as formant synthesis and articulatory synthesis, which achieved a similar result through slightly different strategies. Pioneering researchers recorded a speaker and extracted acoustic features from that recorded speech—formants, defining qualities of speech sounds, in formant synthesis, and manner of articulation (nasal, plosive, vowel, etc.) in articulatory synthesis. Then they’d program rules that recreated those parameters with a digital audio signal.

This TTS was quite robotic; these approaches necessarily abstract away a lot of the variation you’ll find in human speech—things like pitch variation and stresses—because they only allow programmers to write rules for a few parameters at a time. But formant synthesis isn’t just a historical novelty: it’s still used in the open-source TTS synthesizer eSpeak NG, which synthesizes speech for NVDA, one of the leading free screen readers for Windows.

Diphone Synthesis

The next big development in TTS technology is called diphone synthesis, which researchers initiated in the 1970s and was still in popular usage around the turn of the millennium. Diphone synthesis creates machine speech by blending diphones, single-unit combinations of phonemes and the transitions from one phoneme to the next: not just the /c/ in the word cat, but the /c/ plus half of the following /ae/ sound. Researchers record between 3,000 and 5,000 individual diphones, which the system sews together into a coherent utterance.

Diphone synthesis TTS technology also includes software models that predict the duration and pitch of each diphone for the given input. With these two systems layered on one another, the system pastes diphone signals together, then processes the signal to correct pitch and duration. The end result is more natural-sounding synthetic speech than formant synthesis creates—but it’s still far from perfect, and listeners can easily differentiate a human speaker from this synthetic speech.

Unit Selection Synthesis

By the 1990s, a new form of TTS technology was taking over: unit selection synthesis, which is still ideal for low-footprint TTS engines today. Where diphone synthesis added appropriate duration and pitch through a second processing system, unit selection synthesis omits that step: It starts with a large database of recorded speech—around 20 hours or more—and selects the sound fragments that already have the duration and pitch the text input requires for natural-sounding speech.

Unit selection synthesis provides human-like speech without a lot of signal modification, but it’s still identifiably artificial. Meanwhile, throughout all these decades of development, computer processing power and available data storage were making rapid gains. The stage was set for the next era in TTS technology, which, like so much of our current era of computing, relies on artificial intelligence to perform incredible feats of prediction.

Neural Synthesis

Remember the deep neural networks we mentioned earlier? That’s the technology that drives today’s advances in TTS technology, and it’s key to the lifelike results that are now possible. Like its predecessors, neural TTS starts with voice recordings. That’s one input. The other is text, the written script your source voice talent used to create those recordings. Feed these inputs into a deep neural network and it will learn the best possible mapping between one bit of text and the associated acoustic features.

Once the model is trained, it will be able to predict realistic sound for new texts: With a trained neural TTS model—along with a vocoder trained on the same data—the system can produce speech that’s remarkably similar to the source voice talent’s when exposed to virtually any new text. That similarity between source and output is why neural TTS is sometimes called “voice cloning.”

There are all sorts of signal processing tricks you can use to alter the resulting synthetic voice so that it’s not exactly like the source speaker; the key fact to remember is that the best AI-generated TTS voices still start with a human speaker—and TTS technology is only getting more human. Current research is leading to TTS voices that speak with emotional expression, single voices in multiple languages, and ever more lifelike audio quality. Explore the languages and voices available with ReadSpeaker TTS.

The science of TTS continues to advance (and ReadSpeaker’s speech scientists are helping to push it forward). That means there’s always more to learn about TTS—but hopefully we’ve covered the basic text-to-speech meaning and then some in this article. And if you still have questions, follow the links below.

You need human-sounding TTS from a partner you can trust?

Start the conversation with ReadSpeaker today