You’re probably familiar with the Internet of Things. If you have a smart TV, an Apple Watch, or a Ring doorbell camera, IoT is in your home. If you work alongside automated guided vehicles, keep manufacturing lines running with predictive maintenance, or perform surgery with connected eyewear, you know industrial IoT, too.

Whether you know it or not, you’re probably also familiar with the Internet of Voice.

Conversational AI is showing up everywhere, from virtual assistants to automated contact centers to interactive streaming ads. That’s the Internet of Voice, a series of connected systems that speak and listen, allowing humans and machines to interact through a simple conversation.

Increasingly, this voice technology is showing up in IoT devices, too—at work, in the home, and on our bodies. You could say IoT and the Internet of Voice have merged, largely thanks to the adoption of IoT voice control.

So how are these voice-activated gadgets poised to change the way we live and work?

For the answer to that question, we asked Aurélien Chapuzet from Vivoka, a company that provides the Voice Development Kit (VDK), an all-in-one solution that includes all the technologies necessary to create end-to-end embedded voice solutions in 40+ languages. Here’s just some of what we learned.

The Value of IoT Voice Control

What exactly is IoT voice control? It’s the addition of a voice user interface to an IoT device. This technology allows users to control devices—and interact more broadly—through speech, not touch. Regardless of the specific use case, that’s helpful for a few reasons.

- IoT voice control is easier for users. “Voice is the same revolution we saw when we moved from buttons to touch interactions,” Chapuzet says. “Voice just gives more depth. It’s a more intuitive way to interact with technology.” Keyboards and mice gave way to touchscreens because touch is more natural than typing and clicking. Speech is the next logical step. When we want information, we ask for it. When we issue an instruction, we speak it. Voice allows us to do the same with our IoT devices, and that’s a powerful shift.

- Voice interaction is heads-up and hands-free. Industrial IoT users don’t always have the luxury of looking down at a tablet. And they can’t always take their hands off what they’re doing. “When you use smart eyewear, you have a small touchscreen on the side of the glasses. That’s handy, but when you’re working with devices in factories or medicine, you need to have both of your hands free,” Chapuzet says. “Voice, in this case, brings hands-free use for any device, which can improve safety and add productivity.” Of course, voice isn’t the right feature for every product. You don’t need to be able to issue verbal commands to, say, a toaster (although it would be cool if you could!). But in specific applications, like medicine and industry, voice is an absolute game-changer.

- Voice user interfaces interact with other technologies in powerful ways. Voice technology doesn’t exist in a vacuum; it can work with other advances to solve complex problems. For instance, an IoT system may include a biometric component, such as voice recognition, which uses the voice as a form of authentication. That can ensure that only authorized users can speak to an IoT system in the workplace, for example. A voice user interface may also be enhanced with an audio front end (AFE) system, an initial layer of signal processing that optimizes the quality of the speaker’s audio so the machine can better capture and understand the instruction. (You might also find an AFE on the TTS output side, helping listeners understand the machine’s speech, too.)

Headsets that combine vision technology with voice control are also creating a lot of value in many industries; see the sidebar for one example.

Voice picking systems automatically direct order pickers to the next product. A single headset could combine a speaker with a camera to create a fully automated picking system that gives instructions, recognizes products, and updates each product’s status in the warehouse management system the instant it moves.

IoT speech recognition could also play a role. If the product is damaged, or there’s some other exception, the worker could simply announce this fact to the system, updating it instantly—and without putting down the item.

“We have a lot of clients in this field, switching from traditional voice-picking headsets to eyewear,” Chapuzet says. “When you add vision to voice, you have two connected technologies that run together, in harmony, and really bring value.”

The benefits of voice in IoT are clear. But how does it work? Chapuzet gives us a peek under the hood of an IoT voice control system.

The Anatomy of a Voice IoT System

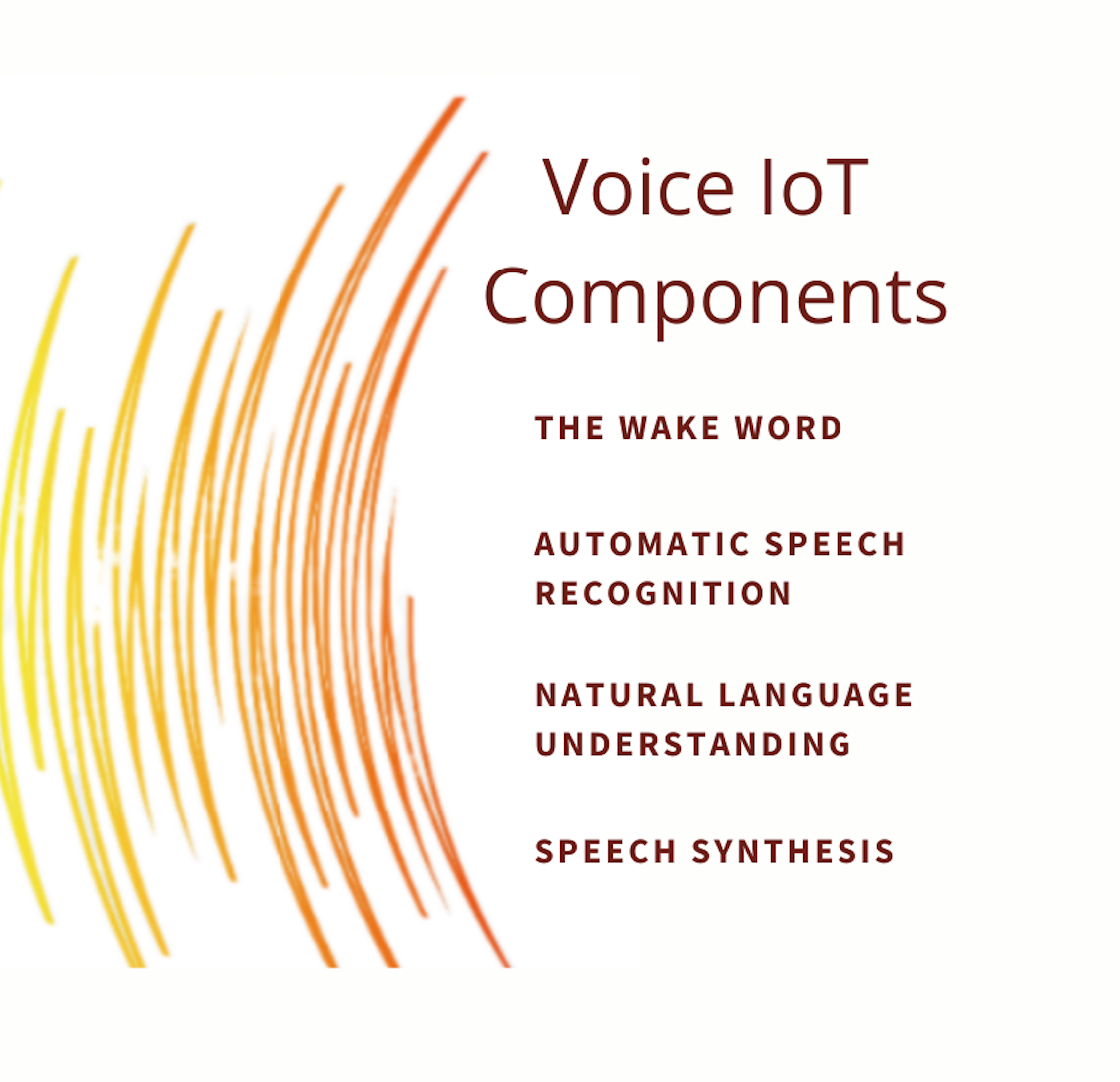

Voice computing isn’t one technology; it’s many. Some IoT devices just use one or two voice technologies. But to build a complete end-to-end conversational system, capable of both receiving input and producing output through voice, you need the following components:

1. The wake word

This element of a voice user interface has gotten a lot of attention, thanks to the companies behind “Hey Google” and “Hey Siri.” The wake word is the trigger that begins the interaction: it tells the device to start actively recording the voice command that follows.
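To make the gating idea concrete, here is a minimal sketch in Python. It is only an illustration under loud assumptions: real wake word engines listen to audio with a small always-on model, whereas this example uses short text strings as stand-ins for what the device hears. The wake phrase `hey device` and the function name `commands_after_wake_word` are hypothetical, not part of any product.

```python
from typing import Iterable, Iterator

# Hypothetical wake phrase, chosen for illustration only.
WAKE_WORD = "hey device"


def commands_after_wake_word(
    utterances: Iterable[str], wake_word: str = WAKE_WORD
) -> Iterator[str]:
    """Yield only the utterance that immediately follows each wake word.

    Text strings stand in for audio here. Anything heard while the
    system is "asleep" is discarded.
    """
    awake = False
    for utterance in utterances:
        text = utterance.strip().lower()
        if awake:
            yield text
            awake = False  # go back to sleep after one command
        elif text == wake_word:
            awake = True


heard = ["nice weather today", "Hey device", "turn on the light", "what was that"]
captured = list(commands_after_wake_word(heard))
print(captured)
```

Only the utterance spoken right after the wake phrase is captured; the small talk before and after it never reaches the rest of the system.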

2. Automatic speech recognition

Now that the system is “awake,” it is able to capture a voice command. Automatic speech recognition (ASR) is the technology that allows the system to “understand” the user’s command by transcribing it from audio frequencies to normalized text.

Computers don’t understand raw sound; they have to convert the data into a form they can work with. This whole process, performed by ASR engines, can occur either on-device (as with Vivoka’s embedded voice technologies) or remotely, in the cloud.
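The transcription itself requires a trained acoustic model, which is beyond a short example. The "normalized text" part, though, can be sketched. The Python snippet below assumes an ASR engine has already produced a raw transcript and shows one plausible normalization pass; the function name `normalize_transcript` and the specific rules (lowercasing, dropping punctuation, collapsing whitespace) are assumptions for illustration, not a description of any particular engine.

```python
import re


def normalize_transcript(raw: str) -> str:
    """Reduce a raw transcript to a plain, predictable form."""
    text = raw.lower()
    # Replace punctuation with spaces, keeping apostrophes for contractions.
    text = re.sub(r"[^\w\s']", " ", text)
    # Collapse runs of whitespace into single spaces.
    text = re.sub(r"\s+", " ", text)
    return text.strip()


print(normalize_transcript("  Turn ON the light, please!  "))
```

A predictable text form like this makes the next stage simpler, because the language understanding step no longer has to account for casing or stray punctuation.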

3. Natural language understanding

The previous step translated sound into text. The system still has to process that text to extract meaning. This is accomplished via a type of voice AI called natural language understanding (NLU).

This technology allows the system to extract intent from a group of words. If you were to say to your smart home system, “Turn on the light,” NLU would identify “turn on” as the action, “the light” as the object of that action, and so on. That’s how the system knows what task it’s supposed to perform.
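As a rough sketch of that idea, the Python example below pulls an action and a target out of a short command using a hand-written pattern. Production NLU relies on trained language models rather than rules like these; the `Intent` class, the `ACTIONS` table, and the `parse_intent` function are all hypothetical names introduced for illustration.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Intent:
    action: str  # what the system should do
    target: str  # the object the action applies to


# Hypothetical phrase-to-action table for a smart home.
ACTIONS = {
    "turn on": "power_on",
    "turn off": "power_off",
    "switch on": "power_on",
    "switch off": "power_off",
}

# Match "<action phrase> [the] <target>", e.g. "turn on the light".
PATTERN = re.compile(
    r"^(" + "|".join(re.escape(phrase) for phrase in ACTIONS) + r")\s+(?:the\s+)?(.+)$"
)


def parse_intent(text: str) -> Optional[Intent]:
    """Return the intent found in the text, or None if nothing matches."""
    match = PATTERN.match(text.strip().lower())
    if match is None:
        return None
    phrase, target = match.groups()
    return Intent(action=ACTIONS[phrase], target=target)


print(parse_intent("Turn on the light"))
print(parse_intent("What time is it"))
```

The first call produces an intent with the action `power_on` and the target `light`; the second returns `None` because the sentence matches no known action, which is the point where a real system would ask the user to rephrase.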

4. Speech synthesis

At this stage, the system replies, verbally, to the user’s command. It might say, “Okay,” if it’s just turning on the lights. Or the interaction may trigger a whole conversation. If you ask your smart speaker to “call John,” the system may see that you have several Johns in your contacts list. Then it can ask, “Do you want me to call John A, John B, or John C?”

A dialogue management system controls this conversation on the machine end; text to speech (TTS) provides the digital voice that does the talking.
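The “call John” exchange can be sketched as a tiny dialogue manager in Python. This is an illustration under stated assumptions, not how any particular product works: the contact list is made up, the names `respond_to_call_request` and `join_options` are hypothetical, and the returned string is simply the text that would be handed to a TTS engine to speak.

```python
from typing import List

# Made-up contact list for illustration.
CONTACTS = ["John A", "John B", "John C", "Maria D"]


def join_options(options: List[str]) -> str:
    """Format two or more names as a spoken list: 'A or B', 'A, B, or C'."""
    if len(options) == 2:
        return f"{options[0]} or {options[1]}"
    return ", ".join(options[:-1]) + f", or {options[-1]}"


def respond_to_call_request(name: str, contacts: List[str]) -> str:
    """Decide what the system should say next for a 'call <name>' command."""
    matches = [c for c in contacts if c.split()[0].lower() == name.lower()]
    if not matches:
        return f"I couldn't find {name} in your contacts."
    if len(matches) == 1:
        return f"Calling {matches[0]}."
    # More than one match: ask a follow-up question instead of guessing.
    return f"Do you want me to call {join_options(matches)}?"


print(respond_to_call_request("John", CONTACTS))
print(respond_to_call_request("Maria", CONTACTS))
```

The design choice worth noting is that ambiguity leads to a question rather than a guess, which is what turns a single command into a conversation.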

Where is IoT voice control heading next?

So far, voice technology has focused on software. That software is deployed on IoT devices, certainly, but it often depends on cloud solutions—processing the data on a distant server.

The future will be in voice hardware. We need hardware that’s built from the ground up to leverage voice interaction, says Chapuzet. Some processors (think Apple’s) are already designed specifically for the operating software they run. Voice should be the same, with computing hardware that’s optimized to support voice on the device, further reducing the need for cloud computing.

This sort of embedded voice computing is already available; it’s a big part of what Vivoka’s VDKs provide, and ReadSpeaker TTS is renowned for offering flexible embedded deployment, as well as cloud-based TTS. But voice-first hardware would be a big step for voice technology—and such an advance is right around the corner.

“We’re working with hardware manufacturers to push the voice revolution even further,” Chapuzet says. “This is the big challenge, in my opinion.”

Given the forward march of technology—including IoT voice control—that challenge won’t stick around for long. Meanwhile, ReadSpeaker speech scientists are creating solutions of their own—solutions that bring increasingly lifelike and emotionally expressive TTS voices to embedded, on-premises, and cloud-based voice-first applications. Hear these advances for yourself with our interactive TTS voice demo.

Request TTS Voice Samples

Listen to ReadSpeaker’s neural TTS voices in dozens of languages and personas—or inquire about your very own custom branded voice. Start the conversation today!

Gaea Vilage is an enterprise voice technology strategist with more than 20 years of experience in global voice solutions.

At ReadSpeaker, she supports the adoption of text-to-speech technologies across industries and operational platforms, helping organizations create more accessible, inclusive, and engaging user experiences across embedded, on-premises, cloud, and SaaS-based environments.

She is passionate about the role of voice as one of the most natural and engaging ways for individuals to interact with technology.